Exploring the premise of an ideal virtual environment | #Secondlife

If you spend enough time in virtual worlds, you eventually come to a number of realizations about pretty much every conceivable facet involved, from the hardware foundations at the very base to the high-level sociological implications. For the most part, we take part in virtual worlds out of curiosity at first, and then invariably move on to more complex activities such as creating items for a marketplace or scripting. There is, of course, marketing, building and quite a few other “services” one could get into as part of a virtual existence, such as working as a host, manager, DJ or dancer (exotic or not), and maybe even, in more extreme cases, entering the sex industry in some fashion.

Beneath all of this, however, there comes a point where you’ve reached a plateau in experiencing synthetic environments and you turn your attention to what makes such a place tick. I like to call this a pixelated enlightenment.

This post follows precisely that train of thought: an exploration of how to make synthetic environment culture better overall by making some fundamental changes to how its structure works. For all intents and purposes, let’s say this is an article about what I personally believe would constitute the Perfect Virtual World.

An image from the upcoming game RESET from Theory Interactive. This is real-time, in-game.

Resource Based Economy

On the surface, the problem with sandbox-based virtual environments is that they lack both perceived value and scarcity of resources. In Second Life, for example, the only resources you have are prims and land impact, coupled with your financial depth. This works in a rudimentary manner to put a price on items created, traded and sold in the virtual environment, but does little to instill a sense of actual worth in those items.

When you purchase or create items in Second Life, those items are essentially infinite. Without the restriction of scarcity, those infinite items rapidly depreciate in perceived value, and I am often boggled by how people can assign worth to virtual items that are infinite to begin with. Unlike a scarce resource, which is limited in supply or availability, as long as the listing exists on Marketplace or in a store you can always get a copy of that item that is just as perfect as the first one the creator made.

In the grand scheme of things, I believe this methodology has to go.

I play a lot of Minecraft myself, and I believe one of the most telling aspects of that game is that it is highly popular despite its rudimentary graphics. Clearly, some element at play is compelling enough to bring in players. I’d argue that what keeps players coming back to this game is the idea of resources and what you can do with them.

By its very nature, the game is built on the principle of resources and limits players to making only what they can afford with the resources they have harvested. While this is compelling in a basic form, I still see an issue with this methodology.

Minecraft is still a static game world, so players are mostly limited in what they can create by the game’s predefined “recipes”. This is why most of the creative process in Minecraft falls under building structures or Redstone circuitry: those are the two main options for creative exploration in this model, assuming we ignore the modding community, which I will do for the moment.

In a sandbox virtual environment such as Second Life, it would be better to tie the various means of creation to resources built into the virtual world. While this is very unlikely to happen given the structure of the current system (the limitations of perceived spatial occupancy in regions and of terrain mechanics), I believe that if one were to sit down and build an entirely new synthetic environment system from the ground up, resource scarcity should be an underlying priority.

At its very core, economics is the efficient management of scarce resources on a regional or global scale, and it is money (or a monetary substitute) that gives everything a common measure of value. In a virtual environment, however, the only scarce resource we’re managing is processing impact, so the “prim count” structure really just determines how many CPU cycles you are allowed to utilize on any given instance.

So let’s define a set of resources up front in order to better manage an actual economy. After all, the things we create in a virtual world should be made of “something”, and those somethings should be in limited supply, depending on how much the users within that system harvest.

The aspect that I like about Minecraft and resources is not the recipes for item creation but instead the resources idea itself. In the context of a sandbox virtual environment like Second Life, where you can create anything, it would be pretty stupid to limit people to pre-defined recipes.

That being said, the element of natural scarcity and resources should remain when building anything in a sandbox virtual environment. So let’s say we pre-define only the resource types from which items can be made, or which can be used for things such as certain elements of scripting.

This is a very good foundation for our perfect virtual world scenario, because now we’ve tied inherent value to items created in the system, with nothing lasting “forever”. Items would come with a built-in durability, a resource cost, and a scarcity based on how much of a given resource is available at the time of purchase/creation. That availability, in turn, depends on how much time the population of the virtual environment spends collecting/harvesting those resources, either to sell to the system or to keep for themselves.

In this manner, we define our resources as:

1. Mundane – Wood, Stone, Plastic, Glass, etc.

2. Rare – Precious metals/gems

3. Exotic – Imaginary resources (float-stone, alchemy, etc.)

4. Energy – Resources used for the production of electricity, fuel or food

Mundane resources are abundant but have lower durability: wood, stone and the like. Wood, and maybe coal, would be the lowest common denominator as a fuel source (burning). Rare resources are much less likely to be found and confer higher durability or “worth”, such as gems or precious metals. Exotic materials like “float-stone” would be exceedingly rare but hold exotic properties, such as enabling unconventional flight for an object built using them. Then there is the Energy resource, which can itself be mundane, rare or exotic: in this case we say wood/coal is mundane, refined resources like gasoline are rare, and exotic energy could come from crystallized sources like Zero-point Crystals.

Here’s the more detailed breakdown:

Let’s say you want to build a house in Second Life. Every prim or mesh you use would have a setting for the resource it is made of (technically, they already have something like this with Materials). In a link set, the sum of the resources of all the prims is the final resource cost for that particular item, and the overall durability of that item is the average of all the durability ratings.
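The link-set rule above (cost is the sum, durability is the average) can be sketched in a few lines. This is a minimal illustration, assuming a hypothetical `Prim` record and made-up durability numbers per resource; none of these names or values come from Second Life itself.

```python
from dataclasses import dataclass

# Hypothetical durability ratings per resource. The resource names echo the
# tiers above; the numbers are illustrative assumptions, not a spec.
DURABILITY = {"wood": 20, "stone": 40, "gold": 80, "float-stone": 90}


@dataclass
class Prim:
    resource: str  # the resource this prim is "made of"
    amount: int    # units of that resource needed to create the prim


def linkset_cost(prims):
    """Sum every prim's resources into the link set's total resource cost."""
    total = {}
    for p in prims:
        total[p.resource] = total.get(p.resource, 0) + p.amount
    return total


def linkset_durability(prims):
    """Plain (unweighted) average of the durability ratings of all prims."""
    return sum(DURABILITY[p.resource] for p in prims) / len(prims)


house = [Prim("wood", 10), Prim("wood", 5), Prim("stone", 8)]
print(linkset_cost(house))        # {'wood': 15, 'stone': 8}
print(linkset_durability(house))  # 26.666666666666668
```

Weighting the average by each prim's `amount` would be an equally plausible reading; the unweighted version simply follows the wording "the average of all durability ratings".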

Resources only come into play at initial creation and for subsequent copies. Beyond that, they are essentially used to calculate the item’s scarcity, durability and behavior.

In the context of a house, it is made of many different types of resources, though I’m sure people would think it clever to make houses entirely out of an abundant resource like “wood” or “stone”. With the resource come the underlying properties of durability and availability. So when you make the item, even if it’s a bunch of link sets, the system first has to confirm that the central economy can support deducting the resources needed to instantiate that item before it can be “rezzed”.

If there is a run on a particular resource that the item is made of, then the item cannot be copied into the virtual world space until the resources become available to do so, either from the world economy or from your own personal resources. This is why you have the option of either keeping your resources to yourself as you harvest them or selling them back to the world economy for resource credits. Which path you take is your choice, and each has its benefits and drawbacks.
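The check-before-rez behaviour described above could look something like this sketch. It assumes resource pools are plain dictionaries and that the owner's personal stock is drawn down before the world economy; that ordering, and the `try_rez` name, are hypothetical choices for illustration.

```python
def try_rez(cost, world_pool, personal_stock):
    """Rez an item only if its whole resource cost can be covered.

    Assumption: the owner's personal stock is drawn down first, and the world
    economy covers the rest. Nothing is deducted unless every resource fits.
    """
    plan = {}
    for resource, needed in cost.items():
        from_personal = min(needed, personal_stock.get(resource, 0))
        from_world = needed - from_personal
        if world_pool.get(resource, 0) < from_world:
            return False  # a "run" on this resource: wait until more is harvested
        plan[resource] = (from_personal, from_world)

    # Every resource checked out, so commit the deductions.
    for resource, (p, w) in plan.items():
        if p:
            personal_stock[resource] -= p
        if w:
            world_pool[resource] -= w
    return True


world = {"wood": 10, "stone": 2}
mine = {"stone": 10}
print(try_rez({"wood": 15}, world, mine))             # False: only 10 wood exists
print(try_rez({"wood": 8, "stone": 5}, world, mine))  # True
print(world, mine)  # {'wood': 2, 'stone': 2} {'stone': 5}
```

Checking everything before deducting anything keeps a failed rez from leaving the economy half-charged.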

Of course, I can already hear people crying bloody murder: what if they just want the freedom to create without these resource restrictions, as they already do in Second Life?

I did think about this, and to that end added an infinite resource called Omnite, which is essentially the universal resource of abundance and high durability. The caveat of using Omnite for your creations is that while it has high durability and is always plentiful, the trade-off is cost. Omnite is the most expensive resource in the economy because it can substitute for any and all resources.

Omnite isn’t an infinite-durability resource either, however high its durability is. To obtain Omnite from the economy, you would have to spend more resource credits (which you can earn or buy outright), resource credits being the universal currency in the virtual environment.

What you would have in the end are items made of conventional resources harvested by the population (with varying durability and qualities), which cost less as an incentive to use those resources, versus items that skip the resource requirements and are made purely of Omnite, at a higher cost for the end item.

In this way we maintain a balance between a real resource economy and traditional-style “creative” forces in a virtual environment.

Certain commands in a scripting environment would also come with a resource cost. For example, in order to fly, an item would need X amount of Float-Stone, plus X amount of Energy Units consumed per minute to maintain flight. So you could script the item accordingly, but it would eat resources and also break down over time.
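A scripted flight command with resource upkeep might be modelled roughly as follows. This is a sketch under stated assumptions: the `FlyingItem` class, the idea that Float-Stone is held rather than consumed, and every default amount are hypothetical placeholders for the post's "X amount".

```python
class FlyingItem:
    """Hypothetical flight upkeep for a scripted item.

    Assumptions: Float-Stone must be built into the item for flight to work at
    all, Energy Units are burned every minute while airborne, and each minute
    of flight wears down durability. All default amounts are placeholders.
    """

    def __init__(self, float_stone, energy, durability,
                 float_stone_needed=1, energy_per_minute=5, wear_per_minute=0.5):
        self.float_stone = float_stone
        self.energy = energy
        self.durability = durability
        self.float_stone_needed = float_stone_needed
        self.energy_per_minute = energy_per_minute
        self.wear_per_minute = wear_per_minute

    def fly(self, minutes):
        """Try to fly for `minutes`; return how many whole minutes were flown."""
        if self.float_stone < self.float_stone_needed:
            return 0  # not enough Float-Stone: the flight command fails outright
        flown = 0
        while (flown < minutes and self.energy >= self.energy_per_minute
               and self.durability > 0):
            self.energy -= self.energy_per_minute    # fuel burned this minute
            self.durability -= self.wear_per_minute  # item breaks down over time
            flown += 1
        return flown


glider = FlyingItem(float_stone=1, energy=12, durability=10)
print(glider.fly(5))  # 2: energy runs out after two minutes
```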

Scripters could still substitute Omnite for any or all components, since it’s the universal resource, but that would make their creation more expensive to operate and purchase. That said, the other thought on this resource-based economy is that when a particular resource is not abundant enough to allow the creation of an item, you can always substitute Omnite at the point of copy/sale for a higher resource credit cost.

Now we have a reason to maintain a healthy resource economy, while putting vast numbers of people to work in various endeavors. The healthier the resource economy, the lower the cost of goods within it. The worse the resource economy, the more items end up defaulting to Omnite and thus costing more.
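The Omnite fallback and its effect on prices can be shown with a small quoting function. The per-unit prices and the `quote` helper are hypothetical; the only rule taken from the post is that any unit the world pool cannot supply is filled with Omnite, which costs more than every conventional resource.

```python
# Hypothetical prices in resource credits per unit. Omnite is priced above
# every conventional resource because it can stand in for any of them.
PRICE = {"wood": 1, "stone": 2, "gold": 10, "float-stone": 25}
OMNITE_PRICE = 40


def quote(cost, world_pool):
    """Price an item, substituting Omnite for any unit the world pool lacks.

    Returns (total resource credits, units of Omnite substituted).
    """
    credits = 0
    omnite_units = 0
    for resource, needed in cost.items():
        available = min(needed, world_pool.get(resource, 0))
        shortfall = needed - available
        credits += available * PRICE[resource] + shortfall * OMNITE_PRICE
        omnite_units += shortfall
    return credits, omnite_units


chair = {"wood": 10, "gold": 2}
print(quote(chair, {"wood": 100, "gold": 5}))  # (30, 0): healthy economy
print(quote(chair, {"wood": 4}))               # (324, 8): scarce economy
```

The same chair costs about ten times more when harvesting falls behind, which is the incentive the paragraph above describes.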

There’s our fair trade for both ends of the spectrum.

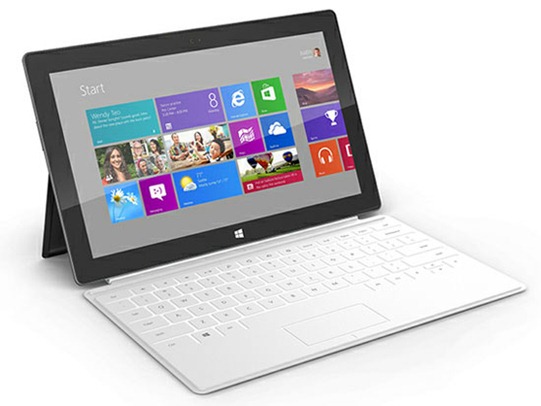

Graphical Fidelity

Without a doubt, graphical fidelity should be as close to photorealistic as possible with as little hardware as it takes to achieve it. Photorealism is a hallmark of an advanced Metaverse system not for its own sake, but because it implies a top-end capability that includes every lesser level of quality at the same time.

Photorealism does not exclude lower quality virtual environment spaces, but instead simply widens the premise of the entire system fidelity.

There are two schools of thought on how this will be accomplished, and both are likely to achieve it over time. On one side we have polygons, which take the brute-force approach to improving graphics, and on the other we have efforts like Atomontage and Euclideon with their procedural and point-cloud data approaches.

Personally, I’m in the cheering section for Euclideon – mostly because they aren’t using a brute-force approach to graphical fidelity but instead intelligent framing of the problem. In the grand scheme of things, I suppose the analogy is thus:

Polygon methods are the jocks in high school: the answer to everything is brute force. They get the job done, but not very intelligently. Euclideon is like the geeks in the chess club, taking their time to analyze the problem and working smarter, not harder, to find an elegant and less expensive solution to it.

Regardless of which methodology gets there, photorealism is inevitable over time in either scenario. The real question is how much time it will take, and whether it will require a supercomputer to pull off.

Binaural Audio

If you’ve never heard binaural audio before, then you’re in for a treat. Essentially, it’s 3D audio that uses head-related transfer function (HRTF) calculations to create positional audio. It’s a way of recording stereo audio specifically without the usual stereo fatigue.
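A full HRTF is measured or modelled per listener, but one of the cues it captures, the interaural time difference (the extra time sound takes to reach the far ear), can be sketched with Woodworth's classic spherical-head approximation. The head radius, the speed of sound and the 48 kHz sample rate below are assumed typical values, not anything specified in this post.

```python
import math

HEAD_RADIUS_M = 0.0875   # assumed average head radius, in metres
SPEED_OF_SOUND = 343.0   # speed of sound in air at roughly 20 °C, m/s


def itd_seconds(azimuth_rad):
    """Woodworth's spherical-head estimate of the interaural time difference.

    azimuth_rad: 0 = straight ahead, pi/2 = directly to one side.
    The formula is intended for angles in that 0..pi/2 range.
    """
    return (HEAD_RADIUS_M / SPEED_OF_SOUND) * (azimuth_rad + math.sin(azimuth_rad))


def itd_samples(azimuth_rad, sample_rate=48_000):
    """Same delay expressed in audio samples, rounded to the nearest sample."""
    return round(itd_seconds(azimuth_rad) * sample_rate)


print(itd_seconds(math.pi / 2))  # about 0.00066 s for a source at the side
```

Delaying one ear's signal by `itd_samples(...)` gives a crude left/right cue; real binaural rendering also applies the frequency-dependent filtering of the full HRTF.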

Use headphones for the following audio tracks.

Decentralized Nature

The underlying structure should be a hybrid decentralized structure to avoid any particular choke points of access or failure while still maintaining some semblance of control for the important things.

Data Agnostic

Everyone has a cache, but nobody is sharing. Instead, a central datacenter is kept busy serving everyone the same redundant information and eventually (inevitably) fails over time. A perfect virtual world wouldn’t need to constantly ask a central server for data, but would instead ask other users in the network. Not only that, but if the data itself is agnostic (without meaning), then a user’s local cache could be scanned first when making requests, before anything is downloaded from the network. Over time, this means less need to download data individually, because users’ caches already hold relevant data for new items.
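One way to read "data agnostic" is content addressing: each blob is named by a hash of its bytes rather than by what it means, so any cache holding those bytes can serve them. The sketch below assumes SHA-256 names and uses plain dictionaries to stand in for the local cache, peers and central server; the `AssetFetcher` class and its lookup order are hypothetical illustrations of the hybrid model described above.

```python
import hashlib


def content_id(data: bytes) -> str:
    """Name a blob by the SHA-256 of its bytes, regardless of what it represents."""
    return hashlib.sha256(data).hexdigest()


class AssetFetcher:
    """Hypothetical lookup order: local cache, then peers, then a central server."""

    def __init__(self, local_cache, peers, central_server):
        self.local_cache = local_cache  # dict: content id -> bytes on this machine
        self.peers = peers              # list of dicts standing in for other users' caches
        self.central = central_server   # dict standing in for the central datacenter
        self.central_requests = 0

    def get(self, cid: str) -> bytes:
        # 1. Already cached locally: no download at all.
        if cid in self.local_cache:
            return self.local_cache[cid]
        # 2. Ask other users; re-hash to reject corrupted or tampered copies.
        for peer in self.peers:
            data = peer.get(cid)
            if data is not None and content_id(data) == cid:
                self.local_cache[cid] = data
                return data
        # 3. Fall back to the central server (the "control" half of the hybrid).
        self.central_requests += 1
        data = self.central[cid]
        self.local_cache[cid] = data
        return data


texture = b"brick texture bytes"
cid = content_id(texture)
fetcher = AssetFetcher(local_cache={}, peers=[{cid: texture}], central_server={})
fetcher.get(cid)                 # served by a peer, then cached locally
print(fetcher.central_requests)  # 0
```

Because two different items that reuse the same texture resolve to the same content ID, a new item can often be assembled entirely from bytes already sitting in the local cache.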

Real World Inclusive

Items in the virtual context should have ties to the real-world context. This is where the virtual world comes together with augmented reality to create a hybrid space: virtual items brought out of your online inventory and placed digitally in your real-world living room via augmented reality. Why not? We can scan real life into the virtual world, so why not make it a two-way street?

More importantly, it’s a marketing/advertising opportunity that has yet to really blossom.

Academic, Non-Profit and Commercial Licenses

There is absolutely no reason to charge full-scale commercial licensing fees for personal or academic use of such a system. It’s downright ignorant on all levels to lump them all together like that. In a perfect virtual environment, non-commercial and academic use of the system would be free or heavily discounted, while commercial use would carry standard pricing tiers.

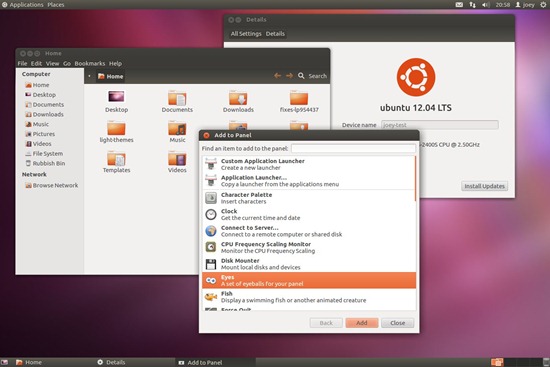

The Platform That Scales Itself

One system for every possible device and scenario, not stripped down or reworked for each context. How things look on a standard computer is how they look on a cell phone or tablet. High definition in the native desktop client also means high definition, and nothing different, in the client embedded in Facebook, aside from interface changes to accommodate how each device is used.

Procedural technologies would be built in from the start to allow fidelity to scale automatically into the future.

These are just some of my thoughts on what would create a perfect virtual environment for the next phase of this industry. Clearly this isn’t something you’re going to get from the current generation, such as Second Life, so the best I can hope for is that the next generation pulls this off.

Comment Question

What things would you like to see in a next-generation synthetic environment that would help make it perfect for you? Drop some comments below and let me know.